Attestiv Included in Google Announcement on AI Content Detection and Media Transparency

Attestiv is proud to be included among the trusted partners supporting this effort.

Attestiv’s CEO weighs in as artificial intelligence advances at an exponential pace, making the line between what is real and what is fabricated increasingly difficult to discern.

AI deception has become multi-modal: attackers can combine fabricated photos, videos, audio, messages, and documents to create a false reality.

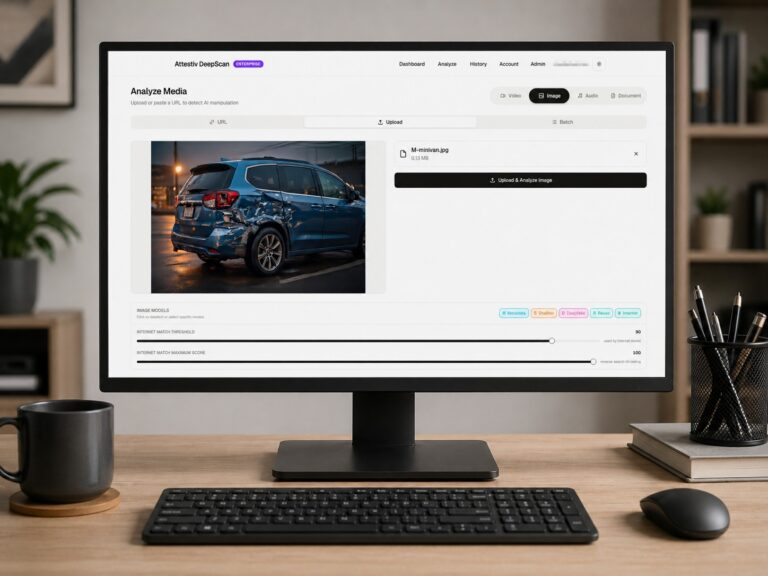

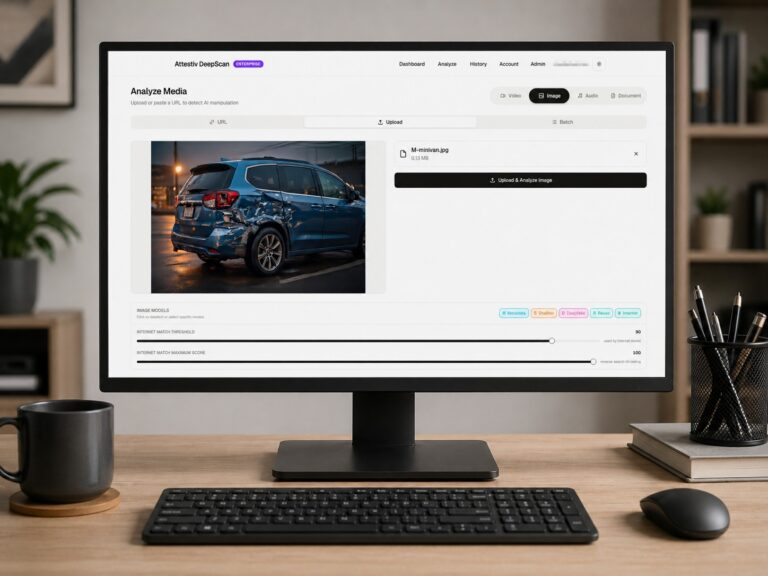

The collaboration integrates real-time media authentication into underwriting and claims workflows to detect synthetic submissions.

ReSource Pro and Attestiv have partnered to help insurers detect and prevent AI-generated fraud with easy-to-integrate solutions.

TechTarget selects Attestiv as one of the top deepfake detection tools to protect enterprise users.

Geo Tv Network and Dubawa fact-check viral videos with the help of Attestiv.

Geo Tv Network fact‑checked the video using Attestiv’s AI, confirming the video’s digital manipulation.

Using AI-powered media forensics, Attestiv assigned the clip a tamper score of 93, indicating a high likelihood that it was AI-synthesized rather than real.

A viral notification claiming new HEC equivalency rules for DAE holders made headlines—until it was proven false, with the help of Attestiv’s AI-powered forensic analysis.