Why Deepfake Detection Belongs in Your Cybersecurity Playbook

It’s easier than ever for attackers to impersonate individuals, forge documents, or create deepfake media that looks and sounds real.

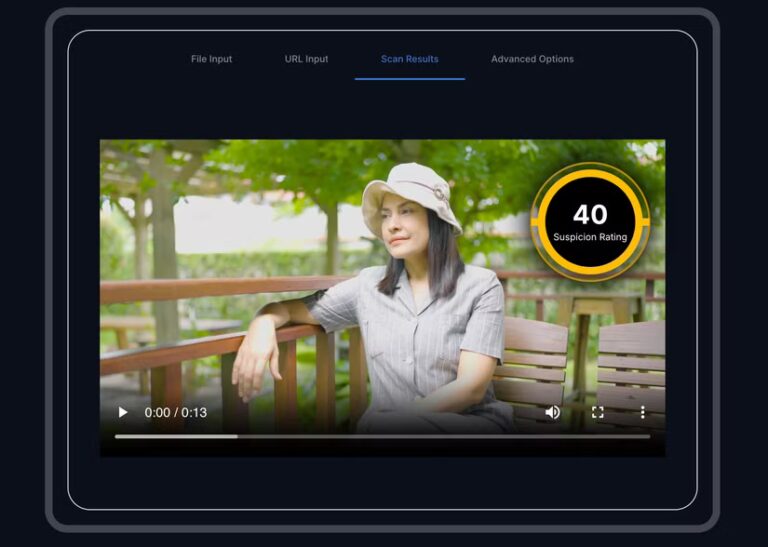

A shocking video surfaced showing a woman drowning on a flooded road, but PesaCheck used Attestiv to confirm that it was AI-generated.

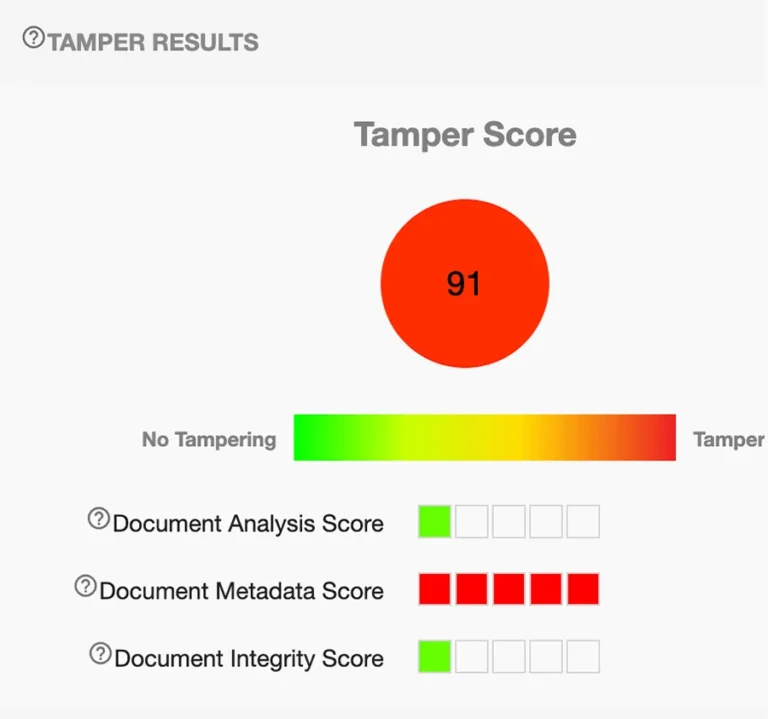

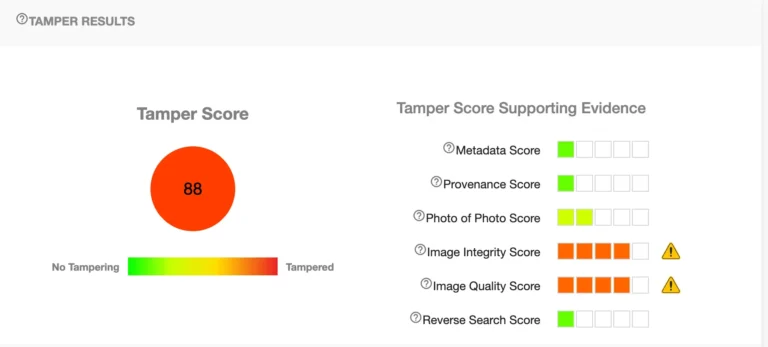

Attestiv document analysis indicates a high likelihood that the content is not authentic.

Deepfakes and AI-enabled deception pose serious challenges to insurance claims and digital trust.

Attestiv was chosen as one of the four most accessible and sophisticated tools for deepfake detection.

Both Attestiv and Hive Moderation found that the video was not authentic.

Using Attestiv, a video claiming to show an Iranian missile hitting Tel Aviv was quickly exposed as AI-generated disinformation.

A recent Dark Reading article revealed how researchers used replay attacks to bypass leading deepfake detection systems. What does this mean for deepfake detectors?

Attestiv Video helps debunk another AI-generated politi-fake.

AI-generated impersonations are targeting everyday professionals — from job applicants and HR recruiters to financial advisors and insurance reps — and the consequences are both personal and business-critical.