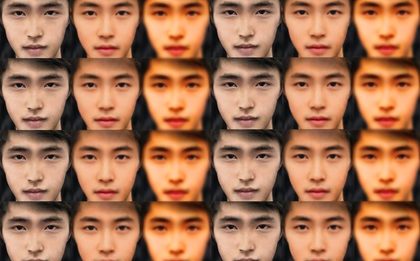

Attack of the Deepfakes

As deepfake videos become easier to produce, the age-old cliché of ‘seeing is believing’ has been fully upended. What can be done to ensure trust?

Why is Blockchain a Polarizing Technology?

Blockchain draws sharply divided views about its utility. Looking beyond cryptocurrency, are there use cases for blockchain in the enterprise?

The Intersection of Digital Transformation and Blockchain

Combining the convenience of mobile phones with the security of blockchain.

How reliant are we on photos and videos? Look no further than the Super Bowl

The expectation that videos and photos are reliable, accurate and authentic is now facing increased scrutiny.

Why the value of cryptocurrency does not diminish the value of distributed ledger technology

DLT will become a staple for many organizations over the next several years as its benefits become evident.